Methodology

Technical documentation for the AeroCastaña analysis pipeline

Overview

AeroCastaña is an AI-enabled analysis pipeline designed to support sustainable Brazil nut (Bertholletia excelsa) management by transforming drone imagery into spatially explicit information on tree crowns, Castaña fruit (coco) presence, and per-tree fruit counts. The system is built as a decision-support tool for Brazil nut concession owners, cooperatives, NGOs, and funders, with the goal of increasing operational efficiency and profitability while maintaining transparency and ecological responsibility.

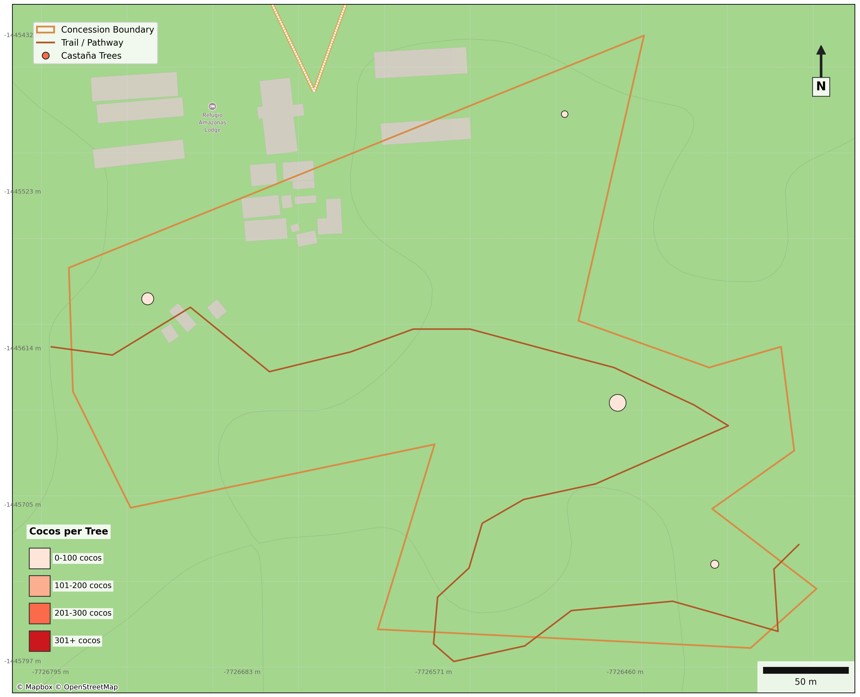

At its core, AeroCastaña provides geolocated maps of individual Brazil nut trees, paired with per-tree coco detections and experimental estimates of harvest volume at both the tree and concession scale. These outputs allow users to prioritize harvest areas, plan labor and logistics, and evaluate seasonal productivity using traceable, repeatable evidence derived directly from aerial data.

The methodology described here reflects the current operational state of the pipeline. Fruit detection is fully functional and is anchored to user-supplied, ground-truthed tree locations. Automated tree detection and yield estimation remain active areas of development, with conversion factors between detected cocos and harvested weight being refined through ongoing multi-year field measurements. All known trees used for model training and validation are ground-truthed using field knowledge and GPS data.

Looking forward, AeroCastaña is being developed not only as a monitoring tool for existing concessions, but also as a framework for exploring and evaluating proposed or under-documented concessions, where limited field data exist and remote assessment can support planning, conservation alignment, and investment decisions.

Data Acquisition

Drone Imagery

All analyses are based on high-resolution RGB imagery collected using consumer-grade drones (e.g., DJI Air 2S, DJI Air 3). Flights are conducted at approximately 100 meters above ground level, resulting in a ground sampling distance (GSD) of ~2.1 cm per pixel, which is sufficient to resolve individual Brazil nut tree crowns and visible Castaña fruit (cocos) within the canopy.
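The relationship between flight height and GSD can be sketched with the standard nadir pinhole-camera formula. This is a minimal illustration, not the pipeline's own code: the function names are hypothetical, and the numbers in the usage note are round placeholders rather than the specifications of any particular drone.

```python
def ground_sampling_distance_cm(altitude_m, sensor_width_mm,
                                focal_length_mm, image_width_px):
    """Return the nadir ground sampling distance in cm per pixel.

    GSD = (sensor width * altitude) / (focal length * image width).
    Sensor width and focal length must share the same unit (mm here).
    """
    if min(altitude_m, sensor_width_mm, focal_length_mm, image_width_px) <= 0:
        raise ValueError("all inputs must be positive")
    gsd_m = (sensor_width_mm * altitude_m) / (focal_length_mm * image_width_px)
    return gsd_m * 100.0  # metres -> centimetres


def footprint_width_m(gsd_cm, image_width_px):
    """Return the ground width covered by one image, in metres."""
    return gsd_cm / 100.0 * image_width_px
```

For example, with placeholder values of a 10 mm sensor width, a 10 mm focal length, and a 5,000-pixel image width, `ground_sampling_distance_cm(100, 10, 10, 5000)` gives 2.0 cm per pixel and a 100 m image footprint. Real values depend on the camera actually flown.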

Imagery is collected using automated, waypoint-based flight missions to ensure consistent altitude, overlap, and spatial coverage across surveys. This standardized acquisition approach supports repeatable analyses across time and space, enabling comparison between sites and seasons as additional data are incorporated.

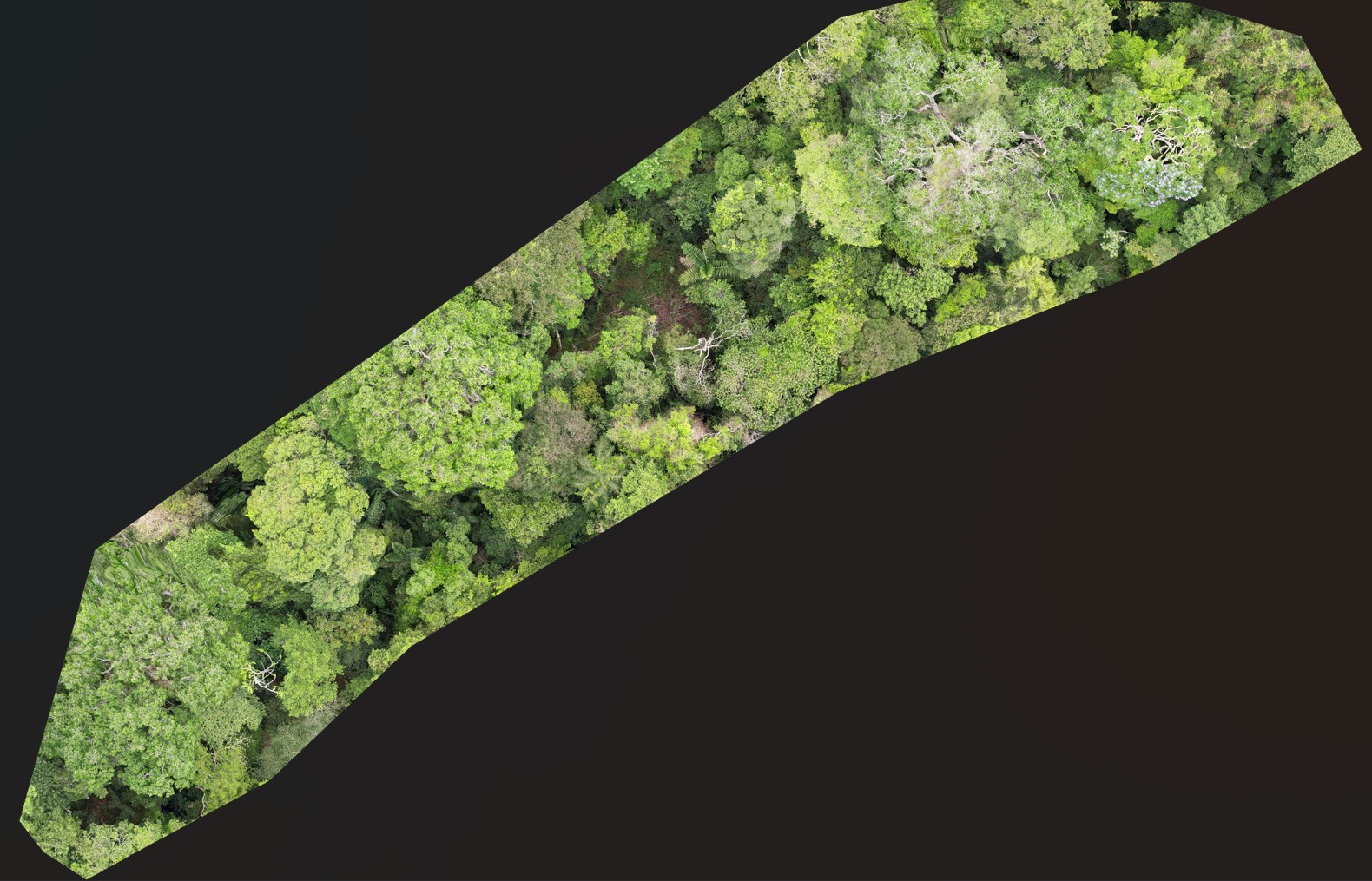

Drone imagery acquisition over a Brazil nut concession

Study Context and Source Data

Training and validation data for AeroCastaña originate from the Wired Amazon Aerobotany Project in the Madre de Dios region of southeastern Peru. Aerobotany is a long-running research initiative founded in 2016 by Dr. Varun Swamy and Daniel Couceiro, focused on using unmanned aerial vehicles (UAVs) to study the Amazon rainforest canopy—one of the least accessible yet most ecologically important forest layers on Earth.

The project is based near the Tambopata National Reserve, within and adjacent to managed forests characterized by exceptionally large, long-lived canopy trees, including Brazil nut (Bertholletia excelsa), Ceiba (Ceiba pentandra), and shihuahuaco (Dipteryx micrantha). These forests have long histories of sustainable use and protection, making them well suited for integrating remote sensing, field-based ecological knowledge, and long-term monitoring.

Aerobotany's drone surveys provide unobstructed, repeatable views of the upper canopy, capturing crown structure, phenological signals, and visible fruiting patterns that are difficult or impossible to observe from the ground. These data—combined with ground-truthed tree locations and field knowledge—form the foundation for training and validating AeroCastaña's tree and fruit detection models.

Ground Truth and Reference Data

Known Brazil nut trees used for model training are fully ground-truthed using a combination of field expertise and GPS measurements. These reference points serve two primary purposes:

- Anchoring detected Brazil nut cocos to verified individual tree locations, allowing fruit counts to be aggregated at the tree level

- Providing a spatial reference for validating model outputs and ensuring detections correspond to known, harvestable trees

Ground-truthed tree locations establish the spatial framework that links canopy-level fruit detections to individual trees, enabling per-tree summaries and concession-scale analyses.

Tree Detection (Experimental)

Current Role in the Pipeline

At present, AeroCastaña does not perform automated detection of Brazil nut trees from imagery alone. Instead, the pipeline relies on a user-provided shapefile of known Brazil nut tree locations as a required input. These known points are spatially matched to the GPS metadata of nadir drone imagery, allowing detected Castaña cocos to be associated with specific trees during analysis.

This design choice reflects current priorities: maximizing reliability and interpretability of fruit-level detections while grounding outputs in verified, harvestable trees.

Experimental Tree Detection and Crown Segmentation

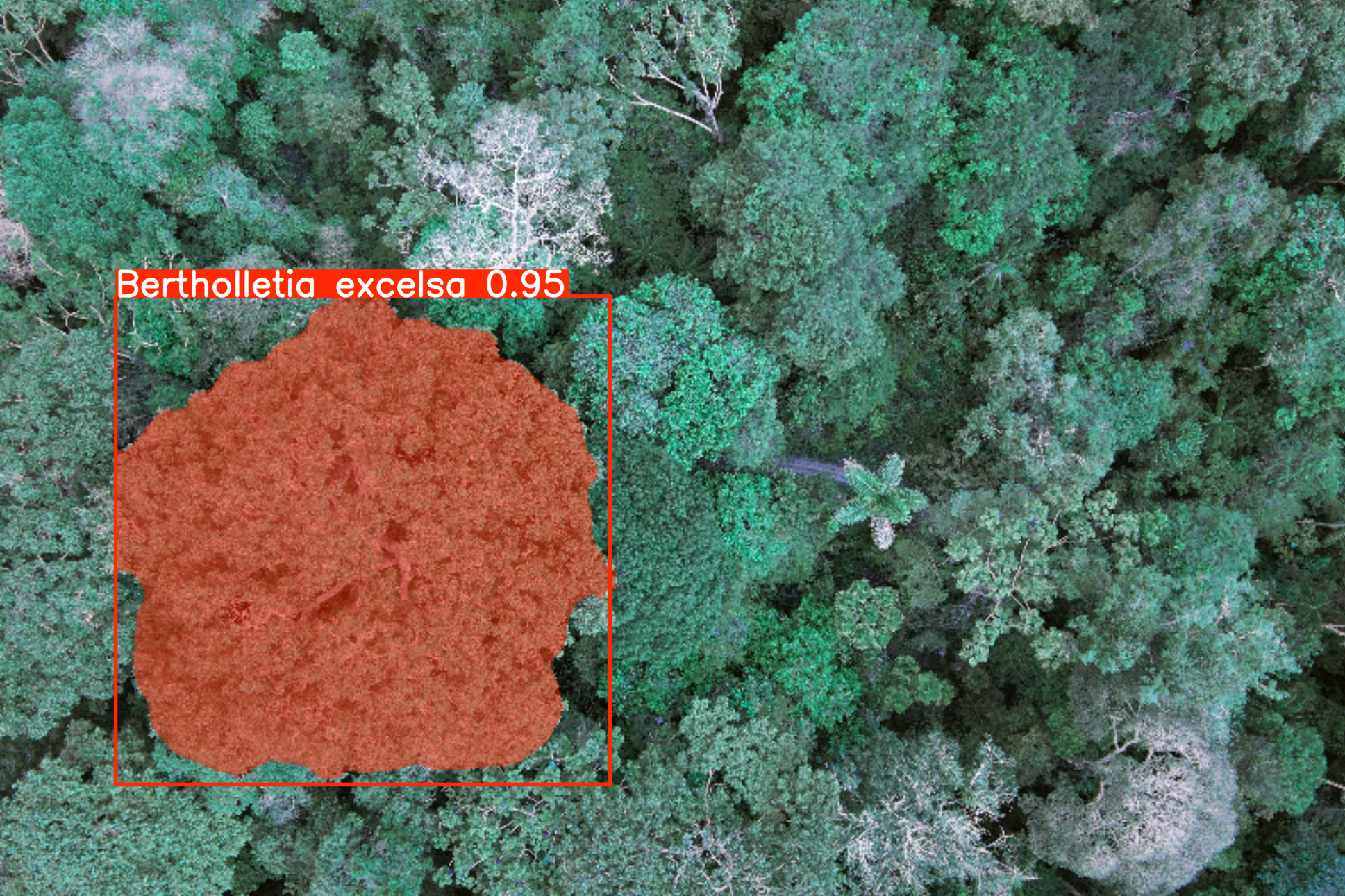

In parallel, experimental models are being developed to detect and segment Brazil nut tree crowns directly from RGB drone imagery. These models aim to identify canopy-scale visual patterns characteristic of mature Bertholletia excelsa trees and to delineate crown extents under real-world forest conditions.

If validated, tree detection would serve several complementary functions:

- Reducing reliance on pre-existing GPS tree inventories, potentially eliminating the requirement to upload known tree point shapefiles

- Improving fruit detection validity by spatially constraining coco detections to plausible crown regions and filtering out erroneous detections

- Enabling tree-level analysis in unmapped or partially mapped areas

Experimental crown detection visualization

Future Applications

Reliable tree detection would substantially expand the scope of AeroCastaña. In addition to supporting existing concessions, it would enable large-area aerial surveys to identify Brazil nut trees across extensive forest landscapes. This capability could be used to:

- Assess the density and distribution of Brazil nut trees in previously unsurveyed forests

- Support the proposal and planning of new Brazil nut concessions

- Contribute to the expansion of economically viable, non-timber forest management systems that incentivize long-term forest protection

By lowering the barrier to surveying and mapping Brazil nut resources at scale, this future capability aligns directly with conservation goals that link livelihoods, monitoring, and the expansion of protected Amazonian forests.

Fruit (Coco) Detection

Fruit detection is the operational core of AeroCastaña. The system uses a custom-trained YOLO11 object detection model to identify visible Castaña cocos in drone imagery. The model was trained on manually annotated cocos across known Brazil nut trees and validated through iterative field comparison.

Detection is performed on orthorectified imagery at the original ground sampling distance (GSD) of ~2.1 cm/pixel. Each detected coco is assigned a bounding box, confidence score, and geographic coordinates. Detections are then spatially joined to ground-truthed tree locations, enabling per-tree fruit count summaries.
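The spatial join described above can be sketched as a nearest-tree assignment. This is an illustrative sketch under stated assumptions, not the pipeline's implementation: the function names, the 20 m matching radius, and the 0.25 confidence cut-off are all hypothetical, and detections are assumed to already carry latitude and longitude.

```python
import math

EARTH_RADIUS_M = 6_371_000.0


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance between two WGS84 points, in metres."""
    p1, p2 = math.radians(lat1), math.radians(lat2)
    dphi = p2 - p1
    dlmb = math.radians(lon2 - lon1)
    a = math.sin(dphi / 2) ** 2 + math.cos(p1) * math.cos(p2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def join_detections_to_trees(detections, trees, max_dist_m=20.0, min_conf=0.25):
    """Assign each detection to its nearest known tree and count per tree.

    detections: list of dicts with "lat", "lon", "conf" keys.
    trees: dict mapping tree id -> (lat, lon).
    max_dist_m and min_conf are hypothetical thresholds.
    Returns (per-tree counts, number of detections too far from any tree).
    """
    counts = {tree_id: 0 for tree_id in trees}
    unassigned = 0
    for det in detections:
        if det["conf"] < min_conf:
            continue  # low-confidence detections are dropped entirely
        nearest_id, nearest_d = None, float("inf")
        for tree_id, (t_lat, t_lon) in trees.items():
            d = haversine_m(det["lat"], det["lon"], t_lat, t_lon)
            if d < nearest_d:
                nearest_id, nearest_d = tree_id, d
        if nearest_id is not None and nearest_d <= max_dist_m:
            counts[nearest_id] += 1
        else:
            unassigned += 1
    return counts, unassigned
```

Detections farther than the radius from every known tree are reported separately rather than forced onto a tree, which keeps the per-tree counts tied to verified locations.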

Model performance continues to improve as additional training examples are incorporated, particularly for edge cases such as partially obscured cocos, off-nadir viewing angles, and variable lighting conditions.

Detected Brazil nut fruits (cocos) associated with known tree locations

The fruit detection models are optimized for small-object detection in high-resolution RGB imagery, where cocos may be partially occluded, unevenly distributed, or visible only from specific viewing angles.

By anchoring detections to verified Brazil nut tree locations, the pipeline reduces biologically implausible detections and enables fruit counts to be aggregated at the per-tree and per-concession levels, supporting downstream estimation and planning workflows.

Yield Estimation (Experimental)

AeroCastaña provides experimental estimates of harvest volume at both the tree and concession scale by applying empirical conversion factors to detected coco counts. These conversion factors link:

- Visible cocos in the canopy → Total cocos produced per tree (accounting for occlusion)

- Cocos produced → Harvested weight in kilograms

Conversion factors are being refined through ongoing multi-year field measurements that track the relationship between canopy observations, ground collections, and final harvest outcomes. Validation is conducted in partnership with local concession holders who provide ground-truth harvest data.
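The two-step conversion can be written out as a short calculation. This is a sketch under assumptions: the occlusion correction is modeled here as dividing by a visibility fraction, which is one plausible form and not necessarily the one the pipeline uses, and the names `visibility_fraction` and `kg_per_coco` are hypothetical. Any numbers passed in are placeholders, not field-derived factors.

```python
def estimate_tree_yield_kg(visible_cocos, visibility_fraction, kg_per_coco):
    """Convert a visible coco count into an estimated harvest weight.

    Step 1: visible cocos -> total cocos, dividing by the fraction of
            cocos assumed to be visible from above (0 < fraction <= 1).
    Step 2: total cocos -> harvested weight, multiplying by kg per coco.
    """
    if not 0 < visibility_fraction <= 1:
        raise ValueError("visibility_fraction must be in (0, 1]")
    if visible_cocos < 0 or kg_per_coco < 0:
        raise ValueError("counts and weights must be non-negative")
    total_cocos = visible_cocos / visibility_fraction
    return total_cocos * kg_per_coco


def estimate_concession_yield_kg(per_tree_counts, visibility_fraction, kg_per_coco):
    """Sum per-tree estimates over a dict of tree id -> visible coco count."""
    return sum(
        estimate_tree_yield_kg(n, visibility_fraction, kg_per_coco)
        for n in per_tree_counts.values()
    )
```

With placeholder factors of 0.5 and 0.25 kg, a tree with 10 visible cocos would be estimated at 20 total cocos and 5 kg. Because both factors multiply through, any error in them scales the estimate proportionally.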

At present, yield estimates should be interpreted as order-of-magnitude approximations intended to support comparative prioritization (e.g., identifying high-producing vs. low-producing trees or zones). Their precision and reliability are expected to improve as additional field data are incorporated.

Model Training and Architecture

The YOLO11 fruit detection model was trained using a dataset of manually annotated drone imagery collected across multiple Brazil nut concessions and survey dates. Training images were captured under varied lighting, phenological, and canopy conditions to maximize model generalization.

All training annotations were validated by field experts with direct knowledge of individual tree identities and fruiting patterns. Model hyperparameters were tuned to balance precision (minimizing false positives) and recall (minimizing missed cocos), with performance monitored using standard object detection metrics (mAP, precision-recall curves).
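To make the precision and recall trade-off concrete, the sketch below scores a set of predicted boxes against annotated boxes using greedy IoU matching. It is a simplified illustration of how such metrics are commonly computed, not the project's evaluation code; the function names and the 0.5 IoU threshold are assumptions.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = a
    bx1, by1, bx2, by2 = b
    iw = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    ih = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = iw * ih
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def precision_recall(predictions, ground_truth, iou_threshold=0.5):
    """Return (precision, recall) for one image.

    predictions: list of (box, confidence) tuples.
    ground_truth: list of annotated boxes.
    Predictions are matched in descending confidence order; each
    annotated box can be matched at most once.
    """
    matched = set()
    tp = 0
    for box, _conf in sorted(predictions, key=lambda p: p[1], reverse=True):
        best_iou, best_idx = 0.0, None
        for idx, gt in enumerate(ground_truth):
            if idx in matched:
                continue
            score = iou(box, gt)
            if score > best_iou:
                best_iou, best_idx = score, idx
        if best_idx is not None and best_iou >= iou_threshold:
            matched.add(best_idx)
            tp += 1
    fp = len(predictions) - tp   # predicted cocos with no matching annotation
    fn = len(ground_truth) - tp  # annotated cocos the model missed
    precision = tp / (tp + fp) if predictions else 0.0
    recall = tp / (tp + fn) if ground_truth else 0.0
    return precision, recall
```

Raising the confidence cut-off before calling `precision_recall` typically removes false positives (higher precision) at the cost of more missed cocos (lower recall), which is the balance the tuning described above targets.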

The model is periodically retrained as new ground-truthed imagery becomes available, ensuring that detection performance continues to improve and adapt to new sites, seasons, and acquisition conditions.

Rather than optimizing exclusively for benchmark scores, model development prioritizes robustness, interpretability, and consistency under real-world survey conditions encountered during concession monitoring.

Training configuration (technical details)

Current fruit detection models use a high-capacity YOLO architecture (YOLO11x, approximately 57 million parameters) and operate at input resolutions chosen for small-object detection in high-resolution canopy imagery.

Training is conducted using cloud-based NVIDIA A100-class GPUs, enabling extended training schedules and large input sizes. The most recent training runs were performed on datasets containing 4,853 annotated fruit instances, with validation conducted using standard object detection metrics.
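A training run of this kind might be launched as sketched below, assuming the Ultralytics Python package is used (the source does not state the training framework). The dataset file `cocos.yaml` and every hyperparameter value shown are hypothetical placeholders, not the project's actual settings.

```python
def build_train_args(data_yaml="cocos.yaml", epochs=300, imgsz=1280, batch=4):
    """Collect training settings in one place.

    All defaults are illustrative placeholders: a large input size is
    shown because small objects such as cocos benefit from more pixels.
    """
    return {
        "data": data_yaml,  # hypothetical dataset definition file
        "epochs": epochs,
        "imgsz": imgsz,
        "batch": batch,
        "device": 0,        # first GPU
    }


def train_fruit_detector(train_args, weights="yolo11x.pt"):
    """Fine-tune a YOLO11x model with the given settings.

    Assumes the `ultralytics` package is installed; it is imported
    lazily so the configuration helper works without it.
    """
    from ultralytics import YOLO

    model = YOLO(weights)
    return model.train(**train_args)
```

Keeping the settings in a plain dictionary makes it straightforward to log the exact configuration alongside each retrained model.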

Quantitative performance metrics and known limitations are discussed in the Model Limitations section.

Inference Pipeline

The AeroCastaña inference pipeline is fully automated and operates as follows:

Required inputs

Drone imagery folder

A directory of nadir RGB images collected at the appropriate flight height, with embedded GPS metadata.

Known Brazil nut tree locations

A point vector file (e.g., Shapefile or GeoPackage) containing ground-truthed Castaña tree locations used to anchor fruit detections spatially.

Optional spatial inputs

Concession boundary polygon

A polygon file defining the concession extent. When provided, this boundary is used to clip outputs and contextualize results in maps.

If no boundary is supplied, the pipeline automatically generates a provisional concession extent by buffering the known tree locations.
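One simple way to derive such a provisional extent is sketched below. As a simplification, it pads the bounding box of the tree points instead of computing a true geometric buffer, which a GIS library would normally provide. The function name, the 100 m default, and the assumption that coordinates are already in a projected system with metre units (e.g., UTM) are all illustrative.

```python
def provisional_extent(tree_xy, buffer_m=100.0):
    """Return a closed rectangular ring around the tree points.

    tree_xy: list of (x, y) tuples in a projected CRS with metre units.
    buffer_m: hypothetical padding distance added on every side.
    This is a padded bounding box, a stand-in for a true point buffer.
    """
    if not tree_xy:
        raise ValueError("at least one tree location is required")
    xs = [x for x, _ in tree_xy]
    ys = [y for _, y in tree_xy]
    min_x, max_x = min(xs) - buffer_m, max(xs) + buffer_m
    min_y, max_y = min(ys) - buffer_m, max(ys) + buffer_m
    # Counter-clockwise ring, closed by repeating the first vertex.
    return [
        (min_x, min_y),
        (max_x, min_y),
        (max_x, max_y),
        (min_x, max_y),
        (min_x, min_y),
    ]
```

A rectangle overestimates the area compared with buffering each point individually, so an extent produced this way is best treated as a map frame and not as a legal or management boundary.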

Trail network (lines)

A line vector file representing footpaths or access trails within the concession. Trails are included in the final maps to support harvest planning and field navigation.

Drone survey for orthomosaic generation

A folder containing a structured drone survey can be supplied to generate an orthomosaic using OpenDroneMap (ODM). The resulting orthomosaic is clipped to the concession boundary and included as a basemap layer in the outputs.

Processing steps

- Drone imagery is spatially indexed using GPS metadata.

- Known tree locations are matched to overlapping images based on spatial proximity.

- Fruit (coco) detection models are applied to relevant imagery.

- Detections are aggregated at the per-tree and per-concession levels.

- Spatial layers, summary tables, annotated imagery, and offline maps are generated.
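The first two processing steps, indexing images by GPS position and matching known trees to nearby images, can be sketched as follows. This is a minimal illustration under assumptions: image positions are taken as already extracted from metadata, distances use a local flat-earth approximation, and the function names and the 30 m matching radius are hypothetical.

```python
import math

EARTH_RADIUS_M = 6_371_000.0


def approx_distance_m(lat1, lon1, lat2, lon2):
    """Equirectangular distance in metres, adequate over short ranges."""
    mean_lat = math.radians((lat1 + lat2) / 2)
    dx = math.radians(lon2 - lon1) * math.cos(mean_lat) * EARTH_RADIUS_M
    dy = math.radians(lat2 - lat1) * EARTH_RADIUS_M
    return math.hypot(dx, dy)


def match_trees_to_images(trees, images, max_dist_m=30.0):
    """Map each tree id to the images whose centre lies within max_dist_m.

    trees: dict of tree id -> (lat, lon).
    images: dict of image name -> (lat, lon) of the nadir capture point.
    Image names are returned nearest first; trees with no nearby image
    map to an empty list so coverage gaps stay visible.
    """
    matches = {}
    for tree_id, (t_lat, t_lon) in trees.items():
        nearby = []
        for name, (i_lat, i_lon) in images.items():
            d = approx_distance_m(t_lat, t_lon, i_lat, i_lon)
            if d <= max_dist_m:
                nearby.append((d, name))
        matches[tree_id] = [name for _, name in sorted(nearby)]
    return matches
```

Only the matched images need to be passed to the detection model, and trees with an empty list can be flagged as lacking imagery coverage in the summary tables.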

Once inputs are provided, the entire workflow executes automatically, producing outputs that can be reviewed, validated, and used directly in the field or in reporting.

Outputs

AeroCastaña produces multiple output formats designed for integration with field logistics, GIS analysis, and decision-making workflows:

GeoPackage (GPKG)

Layered spatial data including tree detections, harvest zones, and concession boundaries

CSV Tables

Tree counts, imagery coverage metrics, and quality assurance flags suitable for reporting to funders

Annotated JPG Imagery

Detection overlays on orthomosaics for field verification

Offline HTML Map

An interactive map cached for use on laptops and tablets in low-connectivity environments

Printable Maps (PDF & PNG)

Static print-ready maps in both PDF and PNG formats for reporting and field use

Orthomosaic (Optional)

If a structured drone survey is provided, the pipeline generates an orthomosaic of the concession and includes it in the offline map and outputs.

All outputs are designed to be interoperable with standard GIS and reporting workflows.

Automation and User Inputs

The pipeline is designed for minimal manual intervention. Once the imagery and known tree locations are uploaded, all processing (including optional orthomosaic generation, detection, spatial joining, and output generation) runs automatically. Users receive email notifications when jobs are complete.

This automation enables rapid turnaround for seasonal assessments and supports use cases where timely information is critical, such as harvest planning under tight logistical windows.

Ethics, Data Ownership, and Responsible Use

AeroCastaña is built with a commitment to transparency, reproducibility, and respect for local knowledge. The system does not replace field expertise; rather, it complements traditional knowledge by providing a scalable, traceable layer of evidence for decision-making.

All training data are collected in partnership with local communities and concession holders. Data stewardship policies ensure that imagery and outputs are shared responsibly and that communities retain control over how their data are used and disseminated.

Looking forward, AeroCastaña is designed to support equitable access to remote sensing technology, with the goal of empowering forest stewards—particularly in under-resourced regions—to make evidence-based decisions that align conservation, livelihoods, and long-term sustainability.

Ongoing Development

AeroCastaña remains in active development. Priority areas for future work include:

- Refining yield estimation through expanded field validation

- Operationalizing automated tree detection for inventory applications

- Expanding to new sites and Brazil nut-producing regions

- Integrating trail and access data for logistics optimization

- Exploring multi-year phenology tracking and productivity trends

Development is guided by ongoing collaboration with field practitioners, conservation scientists, and Brazil nut producers.